1 Chair of Econometrics and Statistics, esp. in the Transport Sector, Technische Universität Dresden, Dresden, Germany

2 Center for Scalable Data Analytics and Artificial Intelligence (ScaDS.AI) Dresden/Leipzig, Germany

Deep Reinforcement Learning (DRL) has provided inspiring solutions to complex tasks in many research fields, but applying DRL agents in the real world remains challenging because of the discrepancies between simulation and the real world. In this work, we propose a DRL framework for training a lane-following and overtaking agent in simulation that can be transferred to the real-world environment with minimal effort. For evaluation, we designed driving scenarios in both simulation and the real-world environment to assess the agent's lane-following and overtaking capabilities and to compare it with other baselines. The proposed framework narrows the Sim2Real gap, so the trained agent drives the vehicle with similar performance in simulation and on its real counterpart.

| Map | Median metric over 10 episodes | DRL agent (slow mode) [1] | DRL agent (fast mode) [1] | PID baseline [2] |
|---|---|---|---|---|
| Normal 1 | Survival time [s] [3] | 60 | 60 | 60 |
| Normal 1 | Traveled distance [m] | 33.75 | 62.27 | 33.17 |
| Normal 1 | Lateral deviation [m∙s] | 1.25 | 2.56 | 1.69 |
| Normal 1 | Orientation deviation [rad∙s] | 5.04 | 9.24 | 3.86 |
| Normal 1 | Major infractions [s] | 0.99 | 0.38 | 0.25 |
| Normal 2 | Survival time [s] | 60 | 60 | 60 |
| Normal 2 | Traveled distance [m] | 34.06 | 53.42 | 32.99 |
| Normal 2 | Lateral deviation [m∙s] | 2.00 | 2.54 | 2.02 |
| Normal 2 | Orientation deviation [rad∙s] | 6.60 | 8.87 | 6.07 |
| Normal 2 | Major infractions [s] | 0.41 | 0.70 | 0.20 |
| Plus track | Survival time [s] | 60 | 60 | 60 |
| Plus track | Traveled distance [m] | 32.51 | 52.88 | 33.14 |
| Plus track | Lateral deviation [m∙s] | 2.07 | 2.68 | 2.10 |
| Plus track | Orientation deviation [rad∙s] | 6.77 | 9.35 | 5.96 |
| Plus track | Major infractions [s] | 1.23 | 0.82 | 0.10 |
| Zig Zag | Survival time [s] | 60 | 60 | 60 |
| Zig Zag | Traveled distance [m] | 32.95 | 56.46 | 33.98 |
| Zig Zag | Lateral deviation [m∙s] | 1.67 | 2.82 | 2.24 |
| Zig Zag | Orientation deviation [rad∙s] | 6.45 | 7.96 | 7.14 |
| Zig Zag | Major infractions [s] | 0.12 | 0.63 | 0.27 |
| V track | Survival time [s] | 60 | 60 | 60 |
| V track | Traveled distance [m] | 32.38 | 51.77 | 32.94 |
| V track | Lateral deviation [m∙s] | 1.87 | 2.95 | 2.63 |
| V track | Orientation deviation [rad∙s] | 7.63 | 10.15 | 9.15 |
| V track | Major infractions [s] | 0.12 | 0.90 | 0.00 |

[1] By adjusting the scale of the action output, the agent can be switched between fast and slow driving modes.

[2] The PID baseline uses exact information from the simulator, which is not available to the other agents.

[3] The maximum evaluation time per episode is 60 s.
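The table footnote says the fast and slow modes differ only in the scale applied to the action output. A minimal sketch of that idea follows; the function name `scale_action`, the normalized action range of [-1, 1], the two-element action layout, and the scale values are all assumptions for illustration and are not taken from the source.

```python
def scale_action(raw_action, scale, low=-1.0, high=1.0):
    """Multiply each action component by `scale`, then clip to [low, high].

    Hypothetical helper: the action range and the clipping step are assumptions.
    """
    return [min(high, max(low, scale * a)) for a in raw_action]


# Assumed layout: two normalized motor commands produced by the policy.
raw = [0.8, 0.6]
slow = scale_action(raw, scale=0.5)  # smaller scale -> slower driving
fast = scale_action(raw, scale=1.0)  # larger scale -> faster driving
print(slow, fast)
```

Because the policy itself is unchanged, such a switch would need no retraining; whether the actual framework also clips the scaled output is not stated in the source.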

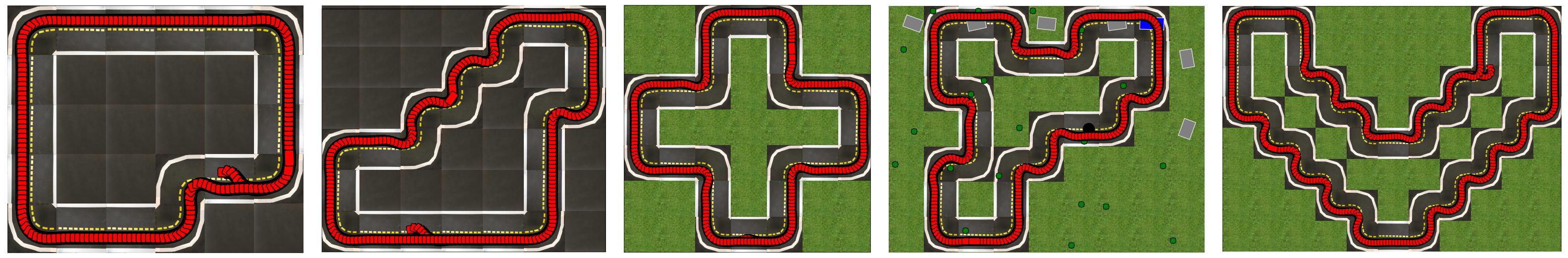

RL agent (slow mode) in different evaluation maps in simulation:

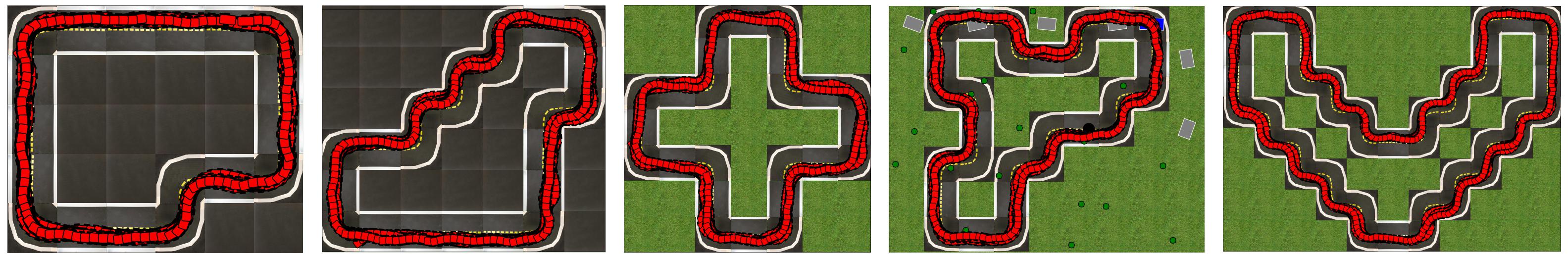

RL agent (fast mode) in different evaluation maps in simulation:

The full version of the evaluation videos is available on .


Currently, the human baseline database consists of 25 human players.

Final score = Survival Time + Traveled distance - Lateral deviation - 0.5 * Orientation deviation - 1.5 * Major infractions
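The formula above can be written as a small function. This is a sketch for checking table values, not the authors' evaluation code; the function and parameter names are chosen here for illustration.

```python
def final_score(survival_time, traveled_distance, lateral_deviation,
                orientation_deviation, major_infractions):
    """Combine the five evaluation metrics into one score (weights from the formula)."""
    return (survival_time
            + traveled_distance
            - lateral_deviation
            - 0.5 * orientation_deviation
            - 1.5 * major_infractions)


# Median metrics of the DRL agent (fast mode) on map Normal 1, as reported in the tables.
score = final_score(60, 62.27, 2.56, 9.24, 0.38)
print(round(score, 2))  # 114.52
```

The result agrees with the final score of 114.52 listed for the fast-mode DRL agent.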

Map: Normal 1

| Metrics | First Place (Martin W.) | Second Place (Neringa) | Third Place (Fabian) | DRL agent* (fast mode) | DRL agent* (slow mode) |
|---|---|---|---|---|---|
| Final score | 104.01 | 83.66 | 80.81 | 114.52 | 88.50 |
| Survival time [s] | 60 | 60 | 60 | 60 | 60 |
| Traveled distance [m] | 55.82 | 38.70 | 38.88 | 62.27 | 33.75 |
| Lateral deviation [m∙s] | 3.92 | 4.31 | 4.12 | 2.56 | 1.25 |
| Orientation deviation [rad∙s] | 10.18 | 10.56 | 12.80 | 9.24 | 5.04 |
| Major infractions [s] | 1.87 | 3.63 | 5.03 | 0.38 | 0.99 |

\* The final score of the DRL agent is based on the median value of each metric, while for human players the best performance is used to compute the final score.
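The footnote distinguishes two aggregation rules: the DRL agent is scored on per-metric medians, while human players are scored on their best performance. The sketch below contrasts the two. It assumes that "best performance" means the single attempt with the highest final score, which the source does not spell out, and the three runs are made-up numbers for illustration only, not results from the study.

```python
from statistics import median


def final_score(survival_time, traveled_distance, lateral_deviation,
                orientation_deviation, major_infractions):
    # Weights follow the final-score formula given in the text.
    return (survival_time + traveled_distance - lateral_deviation
            - 0.5 * orientation_deviation - 1.5 * major_infractions)


def score_from_medians(episodes):
    """DRL agent: take the median of each metric first, then score once."""
    medians = {name: median(ep[name] for ep in episodes) for name in episodes[0]}
    return final_score(**medians)


def best_attempt_score(attempts):
    """Human player: assumed to be the attempt with the highest final score."""
    return max(final_score(**attempt) for attempt in attempts)


# Made-up runs purely for illustration; these are not measurements.
runs = [
    dict(survival_time=60, traveled_distance=30, lateral_deviation=2,
         orientation_deviation=6, major_infractions=0),
    dict(survival_time=60, traveled_distance=34, lateral_deviation=1,
         orientation_deviation=4, major_infractions=2),
    dict(survival_time=60, traveled_distance=32, lateral_deviation=3,
         orientation_deviation=8, major_infractions=1),
]
print(score_from_medians(runs))   # 85.5
print(best_attempt_score(runs))   # 88.0
```

On the same runs the best-attempt rule gives the higher number, so the comparison in the table is, if anything, tilted in favor of the human players.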

Map: Normal 2

| Metrics | First Place (Martin W.) | Second Place (Neringa) | Third Place (Luca) | DRL agent* (fast mode) | DRL agent* (slow mode) |
|---|---|---|---|---|---|
| Final score | 92.39 | 80.59 | 80.57 | 105.40 | 88.15 |
| Survival time [s] | 60 | 60 | 60 | 60 | 60 |
| Traveled distance [m] | 49.03 | 30.17 | 41.66 | 53.42 | 34.06 |
| Lateral deviation [m∙s] | 3.92 | 3.34 | 4.17 | 2.54 | 2.00 |
| Orientation deviation [rad∙s] | 12.54 | 10.67 | 10.62 | 8.87 | 6.60 |
| Major infractions [s] | 4.30 | 0.60 | 7.73 | 0.70 | 0.41 |

\* The final score of the DRL agent is based on the median value of each metric, while for human players the best performance is used to compute the final score.

Map: Plus track

| Metrics | First Place (Martin W.) | Second Place (Luca) | Third Place (Dianzhao) | DRL agent* (fast mode) | DRL agent* (slow mode) |
|---|---|---|---|---|---|
| Final score | 93.86 | 92.02 | 84.09 | 104.30 | 85.21 |
| Survival time [s] | 60 | 60 | 60 | 60 | 60 |
| Traveled distance [m] | 47.45 | 45.24 | 30.89 | 52.88 | 32.51 |
| Lateral deviation [m∙s] | 3.74 | 3.88 | 2.67 | 2.68 | 2.07 |
| Orientation deviation [rad∙s] | 12.31 | 10.78 | 8.27 | 9.35 | 6.77 |
| Major infractions [s] | 2.47 | 2.63 | 0.00 | 0.82 | 1.23 |

\* The final score of the DRL agent is based on the median value of each metric, while for human players the best performance is used to compute the final score.

Map: Zig Zag

| Metrics | First Place (Martin W.) | Second Place (Luca) | Third Place (Niklas) | DRL agent* (fast mode) | DRL agent* (slow mode) |
|---|---|---|---|---|---|
| Final score | 88.70 | 83.58 | 82.19 | 108.72 | 87.88 |
| Survival time [s] | 60 | 60 | 60 | 60 | 60 |
| Traveled distance [m] | 45.71 | 41.11 | 38.50 | 56.46 | 32.95 |
| Lateral deviation [m∙s] | 3.97 | 4.24 | 4.04 | 2.82 | 1.67 |
| Orientation deviation [rad∙s] | 14.38 | 13.08 | 12.78 | 7.96 | 6.45 |
| Major infractions [s] | 3.90 | 4.50 | 3.77 | 0.63 | 0.12 |

\* The final score of the DRL agent is based on the median value of each metric, while for human players the best performance is used to compute the final score.

Map: V track

| Metrics | First Place (Martin W.) | Second Place (Niklas) | Third Place (Dianzhao) | DRL agent* (fast mode) | DRL agent* (slow mode) |
|---|---|---|---|---|---|
| Final score | 88.32 | 84.91 | 80.04 | 102.40 | 86.52 |
| Survival time [s] | 60 | 60 | 60 | 60 | 60 |
| Traveled distance [m] | 43.76 | 38.16 | 29.39 | 51.77 | 32.38 |
| Lateral deviation [m∙s] | 3.82 | 3.92 | 3.34 | 2.95 | 1.87 |
| Orientation deviation [rad∙s] | 13.92 | 13.25 | 11.71 | 10.15 | 7.63 |
| Major infractions [s] | 3.10 | 1.80 | 0.10 | 0.90 | 0.12 |

\* The final score of the DRL agent is based on the median value of each metric, while for human players the best performance is used to compute the final score.

The full version of the evaluation videos is available on .

| PID baseline | DRL agent |

The full version of the evaluation videos is available on .

| Vehicle | Driving direction | Control algorithm | Lateral deviation [1], mean [m] | Lateral deviation [1], std. dev. [m] | Orientation deviation [1], mean [rad] | Orientation deviation [1], std. dev. [rad] | Average velocity [m/s] | Infractions [2] |
|---|---|---|---|---|---|---|---|---|
| Vehicle 1 | Outer ring | PID baseline | -0.0301 | 0.0427 | -0.1208 | 0.2064 | 0.4369 | 0 |
| Vehicle 1 | Outer ring | DRL agent | -0.0232 | 0.0423 | -0.2496 | 0.2808 | 0.6999 | 0 |
| Vehicle 1 | Inner ring | PID baseline | -0.0194 | 0.0716 | 0.0107 | 0.4513 | 0.4371 | 8 |
| Vehicle 1 | Inner ring | DRL agent | -0.0609 | 0.0375 | 0.1993 | 0.4059 | 0.6004 | 0 |
| Vehicle 2 | Outer ring | PID baseline | -0.0467 | 0.0509 | -0.0944 | 0.2776 | 0.4399 | 2 |
| Vehicle 2 | Outer ring | DRL agent | -0.0466 | 0.0359 | -0.1292 | 0.2401 | 0.7289 | 0 |
| Vehicle 2 | Inner ring | PID baseline | -0.0790 | 0.0512 | 0.1654 | 0.4050 | 0.4400 | 3 |
| Vehicle 2 | Inner ring | DRL agent | -0.0614 | 0.0446 | 0.1597 | 0.4039 | 0.6056 | 1 |
| Vehicle 3 | Outer ring | PID baseline | -0.0024 | 0.0773 | -0.4321 | 0.3821 | 0.4413 | 0 |
| Vehicle 3 | Outer ring | DRL agent | 0.0209 | 0.0312 | -0.1033 | 0.2160 | 0.6423 | 0 |
| Vehicle 3 | Inner ring | PID baseline | -0.0528 | 0.0471 | 0.1898 | 0.3031 | 0.4427 | 2 |
| Vehicle 3 | Inner ring | DRL agent | 0.0079 | 0.0618 | 0.2461 | 0.4507 | 0.5814 | 0 |

[1] The lateral and orientation deviations in this table are not the exact real-world values but the output of the perception module.

[2] An infraction in the real-world evaluation is counted whenever the agent drives off the road and has to be placed back on the track by a human.
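The per-run statistics in the real-world table (mean and standard deviation of the signed deviations, plus average velocity) can be computed from logged samples along the following lines. This is a sketch under assumptions: the function name `summarize_run`, the dictionary keys, and the choice of the sample standard deviation are not taken from the source, and the input lists stand in for whatever the perception module logs.

```python
from statistics import mean, stdev


def summarize_run(lateral_m, orientation_rad, speed_mps):
    """Summarize one run from logged samples (hypothetical helper).

    lateral_m / orientation_rad: signed deviations estimated by the perception module.
    speed_mps: logged vehicle speeds. stdev() is the sample standard deviation,
    which is an assumption; the source does not say which estimator was used.
    """
    return {
        "lateral_mean_m": mean(lateral_m),
        "lateral_std_m": stdev(lateral_m),
        "orientation_mean_rad": mean(orientation_rad),
        "orientation_std_rad": stdev(orientation_rad),
        "avg_velocity_mps": mean(speed_mps),
    }


# Placeholder samples for illustration only; not data from the experiments.
summary = summarize_run([-0.1, 0.0, 0.1], [0.2, 0.2, 0.2], [0.5, 0.7])
print(summary)
```

Because the deviations are signed, a mean close to zero together with a large standard deviation would indicate oscillation around the lane center rather than a steady offset.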

The full version of the evaluation videos is available on .

This work was funded by ScaDS.AI (Center for Scalable Data Analytics and Artificial Intelligence) Dresden/Leipzig.
